By Kim Kotlar and Inés Jordan-Zoob. October 18, 2018. Cyberspace continues to present growing opportunities and challenges for national security, global businesses, and individuals alike.…

Category: Cyber Blog

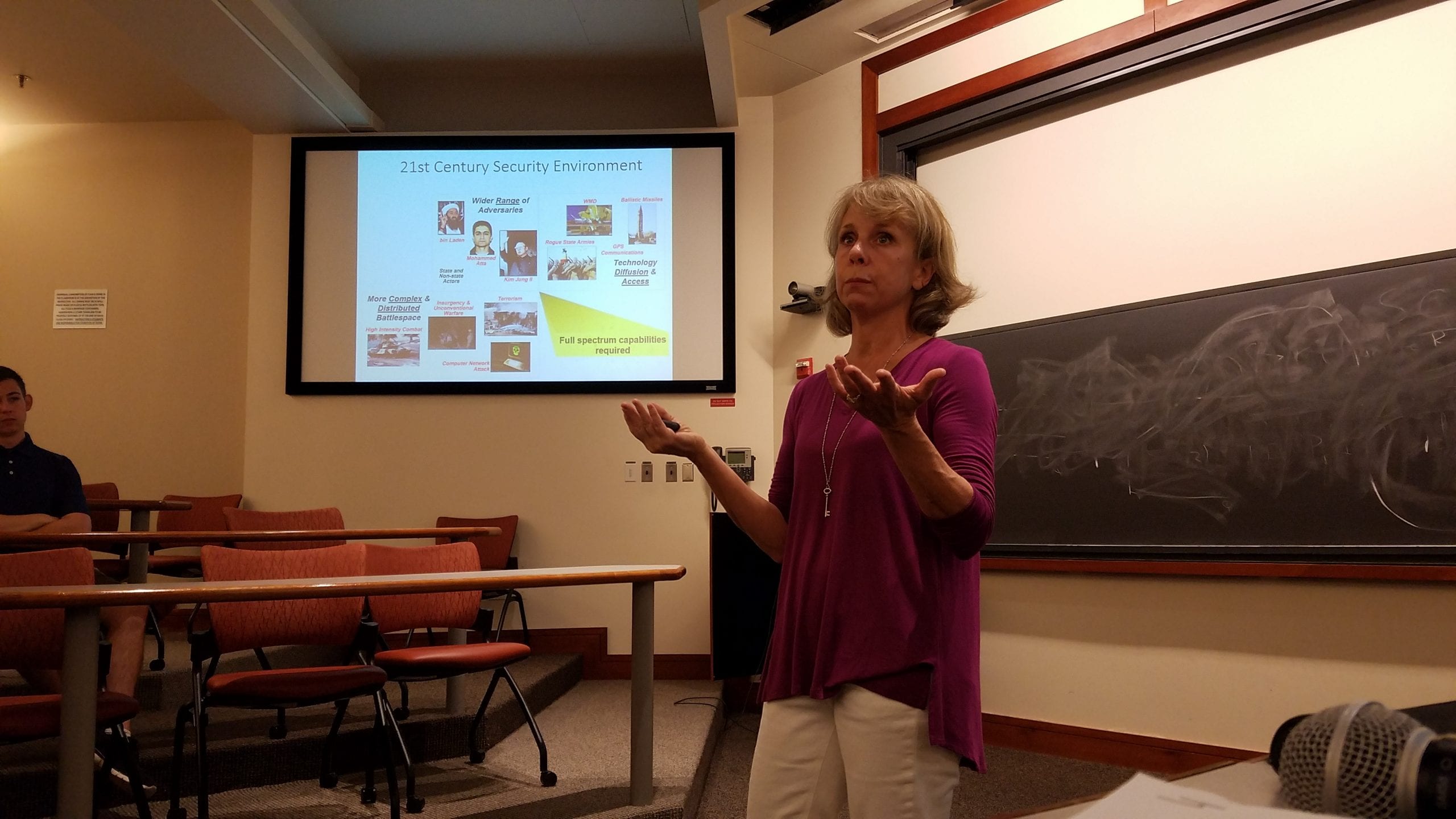

By Justin Sherman. October 14, 2018. On Thursday, October 11, Cyber Team Vice President Inés Jordan-Zoob [pictured above]—a senior double-majoring in political science and art…

By Mary Wang. October 4, 2018. If you’re any bit like me, the first images that come to mind at the word “cybersecurity” are Elliot…

By Justin Sherman. October 3, 2018. “Cyber” is changing everything around us, from international security to domestic surveillance to corporate advertising to the prosecution of…
